io_uring

Introduced in 2019 (kernel 5.1) by Jens Axboe, io_uring (henceforth uring) is a system for providing the kernel with a schedule of system calls, and receiving the results as they're generated. Whereas epoll and kqueue support multiplexing, where you're told when you can usefully perform a system call using some set of filters, uring allows you to specify the system calls themselves (and dependencies between them), and execute the schedule at the kernel dataflow limit. It combines asynchronous I/O, system call polybatching, and flexible buffer management, and is IMHO the most substantial development in the Linux I/O model since Berkeley sockets (yes, I'm aware Berkeley sockets preceded Linux. Let's then say that it's the most substantial development in the UNIX I/O model to originate in Linux):

- Asynchronous I/O without the large copy overheads and restrictions of POSIX AIO

- System call batching/linking across distinct system calls

- Buffer pools: provide a pool of buffers, and they'll be used as needed

- Both polling- and interrupt-driven I/O on the kernel side

The core system calls of uring are wrapped by the C API of liburing. Win32 added a very similar interface, IoRing, in 2020. In my opinion, uring ought to largely displace epoll in new Linux code. FreeBSD seems to be sticking with kqueue, meaning code using uring won't run there, but neither did epoll (save through FreeBSD's somewhat dubious Linux compatibility layer). Both the system calls and liburing have fairly comprehensive man page coverage, including the io_uring.7 top-level page.

Rings

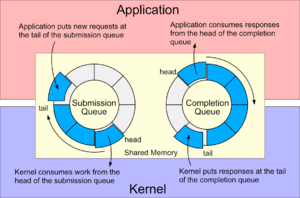

Central to every uring are two ringbuffers holding CQEs (Completion Queue Entries) and SQE (Submission Queue Entries) descriptors (as best I can tell, this terminology was borrowed from the NVMe specification; I've also seen it with Mellanox NICs). Note that SQEs are allocated externally to the SQ descriptor ring (the same is not true for CQEs). SQEs roughly correspond to a single system call: they are tagged with an operation type, and filled in with the values that would traditionally be supplied as arguments to the appropriate function. Userspace is provided references to SQEs on the SQE ring, which it fills in and submits. Submission operates up through a specified SQE, and thus all SQEs before it in the ring must also be ready to go (this is likely the main reason why the SQ holds descriptors to an external ring of SQEs: you can acquire SQEs, and then submit them out of order). The kernel places results in the CQE ring. These rings are shared between kernel- and userspace. The rings must be distinct unless the kernel specifies the IORING_FEAT_SINGLE_MMAP feature (see below).

uring does not generally make use of errno. Synchronous functions return the negative error code as their result. Completion queue entries have the negated error code placed in their res fields.
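Here's a minimal sketch of dealing with that convention in one place. The helper names (uring_res_split, uring_res_report) are hypothetical, not part of liburing; the only thing taken from the text above is that a negative result is a negated error code.

```c
#include <assert.h>
#include <errno.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: split a uring-style result into (value, errno).
 * Returns 0 and stores the non-negative result in *value on success;
 * otherwise stores -1 and returns the positive error code that a
 * traditional system call would have left in errno. */
static int uring_res_split(int res, int *value) {
  assert(value != NULL);
  if (res < 0) {
    *value = -1;
    return -res;   /* e.g. -EAGAIN becomes EAGAIN */
  }
  *value = res;    /* e.g. bytes transferred, or a new descriptor */
  return 0;
}

/* Hypothetical helper: report a failed result without consulting errno. */
static void uring_res_report(const char *what, int res) {
  if (res < 0) {
    fprintf(stderr, "%s failed: %s\n", what, strerror(-res));
  }
}
```

The same helpers work for both cases described above: pass them the return value of a synchronous call, or the res field of a CQE.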

CQEs are usually 16 bytes, and SQEs are usually 64 bytes (but see IORING_SETUP_SQE128 and IORING_SETUP_CQE32 below). Either way, SQEs are allocated externally to the submission queue, which is merely a ring of 32-bit descriptors.
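To make the indirection concrete, here's a toy simulation of a descriptor ring sitting in front of an external SQE array. Everything in it (sim_sq, sim_publish, sim_consume, the four-entry size, one-byte "SQEs") is made up for illustration and is not the kernel's layout; real code also needs the appropriate memory barriers around head and tail, which this single-threaded sketch omits.

```c
#include <assert.h>
#include <stdint.h>

#define SIM_ENTRIES 4u                /* must be a power of two */
#define SIM_MASK (SIM_ENTRIES - 1u)

/* Hypothetical stand-in for the shared SQ state. */
struct sim_sq {
  uint32_t head;                      /* advanced by the consumer (the kernel) */
  uint32_t tail;                      /* advanced by the producer (userspace) */
  uint32_t array[SIM_ENTRIES];        /* ring of 32-bit indices into sqes[] */
  uint8_t sqes[SIM_ENTRIES];          /* stand-in for 64-byte SQEs */
};

/* Publish SQE slot idx at the tail of the descriptor ring.
 * Returns 0 on success, -1 if the ring is full. */
static int sim_publish(struct sim_sq *sq, uint32_t idx) {
  assert(idx < SIM_ENTRIES);
  if (sq->tail - sq->head == SIM_ENTRIES) {
    return -1;
  }
  sq->array[sq->tail & SIM_MASK] = idx;
  sq->tail++;                         /* free-running; masked only on access */
  return 0;
}

/* Consume the oldest published descriptor and return the payload of the
 * SQE it names, or -1 if nothing is pending. */
static int sim_consume(struct sim_sq *sq) {
  if (sq->head == sq->tail) {
    return -1;
  }
  uint32_t idx = sq->array[sq->head & SIM_MASK];
  sq->head++;
  return sq->sqes[idx];
}
```

Because the ring holds indices rather than the SQEs themselves, slot 2 can be published before slot 0 even though it was acquired later; the consumer sees submissions in publication order, not slot order.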

System calls

The liburing interface will be sufficient for most users, and it is possible to operate almost wholly without system calls when the system is busy. For the sake of completeness, here are the three system calls implementing the uring core (from the kernel's io_uring/io_uring.c):

int io_uring_setup(u32 entries, struct io_uring_params *p);

int io_uring_enter(unsigned fd, u32 to_submit, u32 min_complete, u32 flags, const void* argp, size_t argsz);

int io_uring_register(unsigned fd, unsigned opcode, void *arg, unsigned int nr_args);

Note that io_uring_enter(2) corresponds more closely to the io_uring_enter2(3) wrapper, and indeed io_uring_enter(3) is defined in terms of the latter (from liburing's src/syscall.c):

static inline int __sys_io_uring_enter2(unsigned int fd, unsigned int to_submit,

unsigned int min_complete, unsigned int flags, sigset_t *sig, size_t sz){

return (int) __do_syscall6(__NR_io_uring_enter, fd, to_submit, min_complete, flags, sig, sz);

}

static inline int __sys_io_uring_enter(unsigned int fd, unsigned int to_submit,

unsigned int min_complete, unsigned int flags, sigset_t *sig){

return __sys_io_uring_enter2(fd, to_submit, min_complete, flags, sig, _NSIG / 8);

}

io_uring_enter(2) can both submit SQEs and wait until some number of CQEs are available. Its flags parameter is a bitmask over:

| Flag | Description |

|---|---|

| IORING_ENTER_GETEVENTS | Wait until at least min_complete CQEs are ready before returning. |

| IORING_ENTER_SQ_WAKEUP | Wake up the kernel thread created when using IORING_SETUP_SQPOLL. |

| IORING_ENTER_SQ_WAIT | Wait until at least one entry is free in the submission ring before returning. |

| IORING_ENTER_EXT_ARG | (Since Linux 5.11) Interpret sig as a pointer to a struct io_uring_getevents_arg rather than a pointer to sigset_t. This structure can specify both a sigset_t and a timeout: struct io_uring_getevents_arg { __u64 sigmask; __u32 sigmask_sz; __u32 pad; __u64 ts; }; Is ts nanoseconds from now? From the Epoch? Nope! ts is actually a pointer to a __kernel_timespec, passed to u64_to_user_ptr() in the kernel. One of the uglier aspects of uring. |

| IORING_ENTER_REGISTERED_RING | ring_fd is an offset into the registered ring pool rather than a normal file descriptor. |
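Since the pointer-in-a-u64 business for IORING_ENTER_EXT_ARG is easy to get wrong, here's a small sketch of filling in the argument. The two structs are local mirrors of the layouts quoted above (real code should include the kernel headers instead), and wait_arg_set_timeout is a hypothetical helper name.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Local mirror of __kernel_timespec; an assumption for illustration. */
struct timespec_mirror {
  int64_t tv_sec;
  long long tv_nsec;
};

/* Local mirror of struct io_uring_getevents_arg as quoted above. */
struct getevents_arg_mirror {
  uint64_t sigmask;      /* pointer to a sigset_t, stored as an integer */
  uint32_t sigmask_sz;
  uint32_t pad;
  uint64_t ts;           /* pointer to a timespec, stored as an integer */
};

_Static_assert(sizeof(struct getevents_arg_mirror) == 24,
               "mirror should be 24 bytes with no padding");

/* Hypothetical helper: zero the argument and attach a timeout.
 * The timespec must stay valid until the wait returns, since only
 * its address is stored. */
static void wait_arg_set_timeout(struct getevents_arg_mirror *arg,
                                 const struct timespec_mirror *ts) {
  assert(arg != NULL);
  memset(arg, 0, sizeof(*arg));
  arg->ts = (uint64_t)(uintptr_t)ts;   /* userspace twin of u64_to_user_ptr() */
}
```

The argument would then be handed to io_uring_enter(2) as argp, with argsz set to the size of the structure and IORING_ENTER_EXT_ARG set in flags.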

Setup

The io_uring_setup(2) system call returns a file descriptor, and accepts two parameters, u32 entries and struct io_uring_params *p:

int io_uring_setup(u32 entries, struct io_uring_params *p);

struct io_uring_params {

__u32 sq_entries; // number of SQEs, filled by kernel

__u32 cq_entries; // see IORING_SETUP_CQSIZE and IORING_SETUP_CLAMP

__u32 flags; // see "Flags" below

__u32 sq_thread_cpu; // see IORING_SETUP_SQ_AFF

__u32 sq_thread_idle; // see IORING_SETUP_SQPOLL

__u32 features; // see "Kernel features" below, filled by kernel

__u32 wq_fd; // see IORING_SETUP_ATTACH_WQ

__u32 resv[3]; // must be zero

struct io_sqring_offsets sq_off; // see "Ring structure" below, filled by kernel

struct io_cqring_offsets cq_off; // see "Ring structure" below, filled by kernel

};

resv must be zeroed out. In the absence of flags, the uring uses interrupt-driven I/O. Calling close(2) on the returned descriptor frees all resources associated with the uring.

io_uring_setup(2) is wrapped by liburing's io_uring_queue_init(3) and io_uring_queue_init_params(3). When using these wrappers, io_uring_queue_exit(3) should be used to clean up.

int io_uring_queue_init(unsigned entries, struct io_uring *ring, unsigned flags);

int io_uring_queue_init_params(unsigned entries, struct io_uring *ring, struct io_uring_params *params);

void io_uring_queue_exit(struct io_uring *ring);

These wrappers operate on a struct io_uring:

struct io_uring {

struct io_uring_sq sq;

struct io_uring_cq cq;

unsigned flags;

int ring_fd;

unsigned features;

int enter_ring_fd;

__u8 int_flags;

__u8 pad[3];

unsigned pad2;

};

struct io_uring_sq {

unsigned *khead;

unsigned *ktail;

unsigned *kring_mask; // Deprecated: use `ring_mask`

unsigned *kring_entries; // Deprecated: use `ring_entries`

unsigned *kflags;

unsigned *kdropped;

unsigned *array;

struct io_uring_sqe *sqes;

unsigned sqe_head;

unsigned sqe_tail;

size_t ring_sz;

void *ring_ptr;

unsigned ring_mask;

unsigned ring_entries;

unsigned pad[2];

};

struct io_uring_cq {

unsigned *khead;

unsigned *ktail;

unsigned *kring_mask; // Deprecated: use `ring_mask`

unsigned *kring_entries; // Deprecated: use `ring_entries`

unsigned *kflags;

unsigned *koverflow;

struct io_uring_cqe *cqes;

size_t ring_sz;

void *ring_ptr;

unsigned ring_mask;

unsigned ring_entries;

unsigned pad[2];

};

io_uring_queue_init(3) takes an unsigned flags argument, which is passed as the flags field of io_uring_params. io_uring_queue_init_params(3) takes a struct io_uring_params* argument, which is passed through directly to io_uring_setup(2). It's best to avoid mixing the low-level API and that provided by liburing.

Ring structure

The details of ring structure are typically only relevant when using the low-level API. Two offset structs are used to prepare and control the three (or two, see IORING_FEAT_SINGLE_MMAP) backing memory maps.

struct io_sqring_offsets {

__u32 head;

__u32 tail;

__u32 ring_mask;

__u32 ring_entries;

__u32 flags; // IORING_SQ_*

__u32 dropped;

__u32 array;

__u32 resv1;

__u64 user_addr; // sqe mmap for IORING_SETUP_NO_MMAP

};

struct io_cqring_offsets {

__u32 head;

__u32 tail;

__u32 ring_mask;

__u32 ring_entries;

__u32 overflow;

__u32 cqes;

__u32 flags; // IORING_CQ_*

__u32 resv1;

__u64 user_addr; // sq/cq mmap for IORING_SETUP_NO_MMAP

};

As explained in the io_uring_setup(2) man page, the submission queue can be mapped thusly:

mmap(0, sq_off.array + sq_entries * sizeof(__u32), PROT_READ|PROT_WRITE,

MAP_SHARED|MAP_POPULATE, ring_fd, IORING_OFF_SQ_RING);

The submission queue contains the internal data structure followed by an array of SQE descriptors. These descriptors are 32 bits each no matter the architecture, implying that they are indices into the SQE map, not pointers. The SQEs are allocated:

mmap(0, sq_entries * sizeof(struct io_uring_sqe), PROT_READ|PROT_WRITE,

MAP_SHARED|MAP_POPULATE, ring_fd, IORING_OFF_SQES);

and finally the completion queue:

mmap(0, cq_off.cqes + cq_entries * sizeof(struct io_uring_cqe), PROT_READ|PROT_WRITE,

MAP_SHARED|MAP_POPULATE, ring_fd, IORING_OFF_CQ_RING);

Recall that when the kernel expresses IORING_FEAT_SINGLE_MMAP, the submission and completion queues can be allocated in one mmap(2) call.
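The length arithmetic in those three mmap(2) calls can be collected into one place. This is a sketch under stated assumptions: ring_map_sizes and compute_map_sizes are hypothetical names, the offsets are whatever the kernel filled into sq_off.array and cq_off.cqes, and the single-map case simply sizes the shared map to the larger of the two rings.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical container for the three map lengths. */
struct ring_map_sizes {
  size_t sq_ring;   /* length for the IORING_OFF_SQ_RING map */
  size_t cq_ring;   /* length for the IORING_OFF_CQ_RING map */
  size_t sqes;      /* length for the IORING_OFF_SQES map */
};

/* Compute map lengths from the values returned by io_uring_setup(2).
 * sqe_size and cqe_size are parameters because IORING_SETUP_SQE128 and
 * IORING_SETUP_CQE32 change them. */
static struct ring_map_sizes
compute_map_sizes(uint32_t sq_array_off, uint32_t sq_entries,
                  uint32_t cq_cqes_off, uint32_t cq_entries,
                  size_t sqe_size, size_t cqe_size, int single_mmap) {
  struct ring_map_sizes s;
  assert(sqe_size > 0 && cqe_size > 0);
  s.sq_ring = sq_array_off + (size_t)sq_entries * sizeof(uint32_t);
  s.cq_ring = cq_cqes_off + (size_t)cq_entries * cqe_size;
  s.sqes = (size_t)sq_entries * sqe_size;
  if (single_mmap) {
    /* One map backs both rings, so it must cover the larger of the two. */
    size_t both = s.sq_ring > s.cq_ring ? s.sq_ring : s.cq_ring;
    s.sq_ring = both;
    s.cq_ring = both;
  }
  return s;
}
```

With the default 64-byte SQEs and 16-byte CQEs, an 8-entry SQ whose array starts at offset 128 needs a 160-byte ring map and a 512-byte SQE map (the offsets here are invented for the example).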

Setup flags

The flags field is set up by the caller, and is a bitmask over:

| Flag | Kernel version | Description |

|---|---|---|

| IORING_SETUP_IOPOLL | 5.1 | Instruct the kernel to use polled (as opposed to interrupt-driven) I/O. This is intended for block devices, and requires that O_DIRECT was provided when the file descriptor was opened. |

| IORING_SETUP_SQPOLL | 5.1 (5.11 for full features) | Create a kernel thread to poll on the submission queue. If the submission queue is kept busy, this thread will reap SQEs without the need for a system call. If enough time goes by without new submissions, the kernel thread goes to sleep, and io_uring_enter(2) must be called to wake it. Cannot be used with IORING_SETUP_COOP_TASKRUN, IORING_SETUP_TASKRUN_FLAG, or IORING_SETUP_DEFER_TASKRUN. |

| IORING_SETUP_SQ_AFF | 5.1 | Only meaningful with IORING_SETUP_SQPOLL. The poll thread will be bound to the core specified in sq_thread_cpu. |

| IORING_SETUP_CQSIZE | 5.1 | Create the completion queue with cq_entries entries. This value must be greater than entries, and might be rounded up to the next power of 2. |

| IORING_SETUP_CLAMP | 5.1 | Clamp entries at IORING_MAX_ENTRIES and cq_entries at IORING_MAX_CQ_ENTRIES. |

| IORING_SETUP_ATTACH_WQ | 5.1 | Specify a uring in wq_fd, and the new uring will share that uring's worker thread backend. |

| IORING_SETUP_R_DISABLED | 5.10 | Start the uring disabled, requiring that it be enabled with io_uring_register(2). |

| IORING_SETUP_SUBMIT_ALL | 5.18 | Continue submitting SQEs from a batch even after one results in error. |

| IORING_SETUP_COOP_TASKRUN | 5.19 | Don't interrupt userspace processes to indicate CQE availability. It's usually fine for completions to be processed at the next kernelspace transition anyway, in which case this flag can be provided to improve performance. Cannot be used with IORING_SETUP_SQPOLL. |

| IORING_SETUP_TASKRUN_FLAG | 5.19 | Requires IORING_SETUP_COOP_TASKRUN or IORING_SETUP_DEFER_TASKRUN. When completions are pending awaiting processing, the IORING_SQ_TASKRUN flag will be set in the submission ring. This will be checked by io_uring_peek_cqe(), which will enter the kernel to process them. Cannot be used with IORING_SETUP_SQPOLL. |

| IORING_SETUP_SQE128 | 5.19 | Use 128-byte SQEs, necessary for NVMe passthroughs using IORING_OP_URING_CMD. |

| IORING_SETUP_CQE32 | 5.19 | Use 32-byte CQEs, necessary for NVMe passthroughs using IORING_OP_URING_CMD. |

| IORING_SETUP_SINGLE_ISSUER | 6.0 | Hint to the kernel that only a single thread will submit requests, allowing for optimizations. This thread must either be the thread which created the ring, or (iff IORING_SETUP_R_DISABLED is used) the thread which enables the ring. |

| IORING_SETUP_DEFER_TASKRUN | 6.1 | Requires IORING_SETUP_SINGLE_ISSUER. Don't process completions at arbitrary kernel/scheduler transitions, but only in io_uring_enter(2) when called with IORING_ENTER_GETEVENTS by the thread that submitted the SQEs. Cannot be used with IORING_SETUP_SQPOLL. |

| IORING_SETUP_NO_MMAP | 6.5 | The caller will provide two memory regions (the queue rings in cq_off.user_addr, the SQEs in sq_off.user_addr) rather than having them allocated by the kernel (they may both come from a single map). This allows for the use of huge pages. |

| IORING_SETUP_REGISTERED_FD_ONLY | 6.6 | Registers the ring fd for use with IORING_REGISTER_USE_REGISTERED_RING. Returns a registered fd index. |
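The power-of-two rounding mentioned for IORING_SETUP_CQSIZE is worth having in hand when sizing userspace structures to match the ring. A minimal sketch; roundup_pow2 is a hypothetical helper, not something exported by the kernel or liburing, and the authoritative size is always whatever the kernel writes back into cq_entries.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: smallest power of two that is >= n.
 * n must be in [1, 2^31] so that the result fits in 32 bits. */
static uint32_t roundup_pow2(uint32_t n) {
  assert(n > 0 && n <= (UINT32_C(1) << 31));
  uint32_t p = 1;
  while (p < n) {
    p <<= 1;
  }
  return p;
}
```

So a request for 1000 completion entries would be expected to yield a 1024-entry ring, while a request for 1024 is left alone.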

When IORING_SETUP_R_DISABLED is used, the ring must be enabled before submissions can take place. If using the liburing API, this is done via io_uring_enable_rings(3):

int io_uring_enable_rings(struct io_uring *ring);

Kernel features

Various functionality was added to the kernel following the initial release of uring, and is thus not necessarily available on all kernels supporting the basic system calls. The __u32 features field of the io_uring_params parameter to io_uring_setup(2) is filled in with feature flags by the kernel, a bitmask over:

| Feature | Kernel version | Description |

|---|---|---|

| IORING_FEAT_SINGLE_MMAP | 5.4 | A single mmap(2) can be used for both the submission and completion rings. |

| IORING_FEAT_NODROP | 5.5 (5.19 for full features) | Completion queue events are not dropped. Instead, submitting results in -EBUSY until completion reaping yields sufficient room for the overflows. As of 5.19, io_uring_enter(2) furthermore returns -EBADR rather than waiting for completions. |

| IORING_FEAT_SUBMIT_STABLE | 5.5 | Data submitted for async can be mutated following submission, rather than only following completion. |

| IORING_FEAT_RW_CUR_POS | 5.6 | Reading and writing can provide -1 for offset to indicate the current file position. |

| IORING_FEAT_CUR_PERSONALITY | 5.6 | Assume the credentials of the thread calling io_uring_enter(2), rather than the thread which created the uring. Registered personalities can always be used. |

| IORING_FEAT_FAST_POLL | 5.7 | Internal polling for data/space readiness is supported. |

| IORING_FEAT_POLL_32BITS | 5.9 | IORING_OP_POLL_ADD accepts all epoll flags, including EPOLLEXCLUSIVE. |

| IORING_FEAT_SQPOLL_NONFIXED | 5.11 | IORING_SETUP_SQPOLL doesn't require registered files. |

| IORING_FEAT_ENTER_EXT_ARG | 5.11 | io_uring_enter(2) supports struct io_uring_getevents_arg. |

| IORING_FEAT_NATIVE_WORKERS | 5.12 | Async helpers use native workers rather than kernel threads. |

| IORING_FEAT_RSRC_TAGS | 5.13 | Registered buffers can be updated in partes rather than in toto. |

| IORING_FEAT_CQE_SKIP | 5.17 | IOSQE_CQE_SKIP_SUCCESS can be used to inhibit CQE generation on success. |

| IORING_FEAT_LINKED_FILE | 5.17 | Defer file assignment until execution of a given request begins. |

| IORING_FEAT_REG_REG_RING | 6.3 | Ring descriptors can be registered with IORING_REGISTER_USE_REGISTERED_RING. |

Registered resources

Various resources can be registered with the kernel side of the uring. Registration always occurs via the io_uring_register(2) system call, multiplexed on unsigned opcode. If opcode contains the bit IORING_REGISTER_USE_REGISTERED_RING, the fd passed must be a registered ring descriptor.

Rings

Registering the uring's file descriptor (as returned by io_uring_setup(2)) with IORING_REGISTER_RING_FDS allows for less overhead in io_uring_enter(2) when called with IORING_ENTER_REGISTERED_RING. If more than one thread is to use the uring, each thread must distinctly register the ring fd.

| Flag | Kernel | Description |

|---|---|---|

| IORING_REGISTER_RING_FDS | 5.18 | Register this ring descriptor for use by this thread. |

| IORING_UNREGISTER_RING_FDS | 5.18 | Unregister the registered ring descriptor. |

Buffers

Since Linux 5.7, user-allocated memory can be provided to uring in groups of buffers (each with a group ID), in which each buffer has its own ID. This was done with the io_uring_prep_provide_buffers(3) call, operating on an SQE. Since 5.19, the "ringmapped buffers" technique (io_uring_register_buf_ring(3)) allows these buffers to be used much more effectively.

| Flag | Kernel | Description |

|---|---|---|

| IORING_REGISTER_BUFFERS | 5.1 | |

| IORING_UNREGISTER_BUFFERS | 5.1 | |

| IORING_REGISTER_BUFFERS2 | 5.13 | struct io_uring_rsrc_register { __u32 nr; __u32 resv; __u64 resv2; __aligned_u64 data; __aligned_u64 tags; }; |

| IORING_REGISTER_BUFFERS_UPDATE | 5.13 | struct io_uring_rsrc_update2 { __u32 offset; __u32 resv; __aligned_u64 data; __aligned_u64 tags; __u32 nr; __u32 resv2; }; |

| IORING_REGISTER_PBUF_RING | 5.19 | struct io_uring_buf_reg { __u64 ring_addr; __u32 ring_entries; __u16 bgid; __u16 pad; __u64 resv[3]; }; |

| IORING_UNREGISTER_PBUF_RING | 5.19 | |

Registered file descriptors

Registered (sometimes "direct") descriptors are integers corresponding to private file handle structures internal to the uring, and can be used anywhere uring wants a file descriptor through the IOSQE_FIXED_FILE flag. They have less overhead than true file descriptors, which use structures shared among threads. Note that registered files are required for submission queue polling unless the IORING_FEAT_SQPOLL_NONFIXED feature flag was returned. Registered files can be passed between rings using io_uring_prep_msg_ring_fd(3).

int io_uring_register_files(struct io_uring *ring, const int *files, unsigned nr_files);

int io_uring_register_files_tags(struct io_uring *ring, const int *files, const __u64 *tags, unsigned nr);

int io_uring_register_files_sparse(struct io_uring *ring, unsigned nr_files);

int io_uring_register_files_update(struct io_uring *ring, unsigned off, const int *files, unsigned nr_files);

int io_uring_register_files_update_tag(struct io_uring *ring, unsigned off, const int *files, const __u64 *tags, unsigned nr_files);

int io_uring_unregister_files(struct io_uring *ring);

| Flag | Kernel | Description |

|---|---|---|

| IORING_REGISTER_FILES | 5.1 | |

| IORING_UNREGISTER_FILES | 5.1 | |

| IORING_REGISTER_FILES2 | 5.13 | |

| IORING_REGISTER_FILES_UPDATE | 5.5 (5.12 for all features) | |

| IORING_REGISTER_FILES_UPDATE2 | 5.13 | |

| IORING_REGISTER_FILE_ALLOC_RANGE | 6.0 | struct io_uring_file_index_range { __u32 off; __u32 len; __u64 resv; }; |

Personalities

| Flag | Kernel | Description |

|---|---|---|

| IORING_REGISTER_PERSONALITY | 5.6 | |

| IORING_UNREGISTER_PERSONALITY | 5.6 | |

NAPI settings

| Flag | Kernel | Description |

|---|---|---|

| IORING_REGISTER_NAPI | | |

| IORING_UNREGISTER_NAPI | | |

Submitting work

Submitting work consists of four steps:

- Acquiring free SQEs

- Filling in those SQEs

- Placing those SQEs at the tail of the submission queue

- Submitting the work, possibly using a system call

The SQE structure

struct io_uring_sqe has several large unions which I won't reproduce in full here; consult liburing.h if you want the details. The instructive elements include:

struct io_uring_sqe {

__u8 opcode; /* type of operation for this sqe */

__u8 flags; /* IOSQE_ flags */

__u16 ioprio; /* ioprio for the request */

__s32 fd; /* file descriptor to do IO on */

... various unions for representing the request details ...

};

Flags can be set on a per-SQE basis using io_uring_sqe_set_flags(3), or writing to the flags field directly:

static inline

void io_uring_sqe_set_flags(struct io_uring_sqe *sqe, unsigned flags){

sqe->flags = (__u8) flags;

}

The flags are a bitfield over:

| SQE flag | Kernel | Description |

|---|---|---|

| IOSQE_FIXED_FILE | 5.1 | References a registered descriptor. |

| IOSQE_IO_DRAIN | 5.2 | Issue after in-flight I/O, and do not issue new SQEs before this completes. Incompatible with IOSQE_CQE_SKIP_SUCCESS anywhere in the same linked set. |

| IOSQE_IO_LINK | 5.3 | Links next SQE. Linked SQEs will not be started until this is done, and any unexpected result (errors, short reads, etc.) will fail all linked SQEs with -ECANCELED. |

| IOSQE_IO_HARDLINK | 5.5 | Same as IOSQE_IO_LINK, but a completion failure does not sever the chain (a submission failure still does). |

| IOSQE_ASYNC | 5.6 | Always operate asynchronously (operations which would not block are typically executed inline). |

| IOSQE_BUFFER_SELECT | 5.7 | Select a buffer from a provided buffer group (see "Buffers" above). |

| IOSQE_CQE_SKIP_SUCCESS | 5.17 | Don't post a CQE on success. Incompatible with IOSQE_IO_DRAIN anywhere in the same linked set. |

ioprio includes the following flags:

| SQE ioprio flag | Description |

|---|---|

| IORING_RECV_MULTISHOT | |

| IORING_SEND_MULTISHOT | Introduced in 6.8. |

| IORING_ACCEPT_MULTISHOT | |

| IORING_RECVSEND_FIXED_BUF | Use a registered buffer. |

| IORING_RECVSEND_POLL_FIRST | Assume the socket in a recv(2)-like command does not have data ready, so an internal poll is set up immediately. |

Acquiring SQEs

If using the higher-level API, io_uring_get_sqe(3) is the primary means to acquire an SQE. If none are available, it will return NULL, and you should submit outstanding SQEs to free one up.

struct io_uring_sqe *io_uring_get_sqe(struct io_uring *ring);

If IORING_SETUP_SQPOLL was used to set up kernel-side SQ polling, IORING_ENTER_SQ_WAIT can be used together with io_uring_enter(2) to block until an SQE becomes available.

Prepping SQEs

Each SQE must be seeded with the object upon which it acts (usually a file descriptor) and any necessary arguments. You'll usually also use the user data area.

User data

Each SQE provides 64 bits of user-controlled data which will be copied through to any generated CQEs. Since CQEs don't include the relevant file descriptor, you'll almost always be encoding some kind of lookup information into this area.

void io_uring_sqe_set_data(struct io_uring_sqe *sqe, void *user_data);

void io_uring_sqe_set_data64(struct io_uring_sqe *sqe, __u64 data);

void *io_uring_cqe_get_data(struct io_uring_cqe *cqe);

__u64 io_uring_cqe_get_data64(struct io_uring_cqe *cqe);

Here's an example C++ data type that encodes eight bits as an operation type, eight bits as an index, and forty-eight bits as other data. I typically use something like this to reflect the operation which was used, the index into some relevant data structure, and other information about the operation (perhaps an offset or a length). Salt to taste, of course.

union URingCtx {

struct rep {

rep(uint8_t op, unsigned ix, uint64_t d):

type(static_cast<URingCtx::rep::optype>(op)), idx(ix), data(d)

{

if(type >= MAXOP){

throw std::invalid_argument("bad uringctx op");

}

if(ix > MAXIDX){

throw std::invalid_argument("bad uringctx index");

}

if(d > 0xffffffffffffull){

throw std::invalid_argument("bad uringctx data");

}

}

enum optype: uint8_t {

...app-specific types...

MAXOP // shouldn't be used

} type: 8;

uint8_t idx: 8;

uint64_t data: 48;

} r;

uint64_t val;

static constexpr auto MAXIDX = 255u;

URingCtx(uint8_t op, unsigned idx, uint64_t d):

r(op, idx, d)

{}

URingCtx(uint64_t v):

URingCtx(v & 0xffu, (v >> 8u) & 0xffu, v >> 16u)

{}

};

The majority of I/O-related system calls have by now a uring equivalent (the one major exception of which I'm aware is directory listing; there seems to be no readdir(3)/getdents(2)). What follows is an incomplete list.

Just chillin'

// IORING_OP_NOP

void io_uring_prep_nop(struct io_uring_sqe *sqe);

Opening and closing file descriptors

// IORING_OP_OPENAT (5.6, direct support since 5.15)

void io_uring_prep_openat(struct io_uring_sqe *sqe, int dfd, const char *path,

int flags, mode_t mode);

void io_uring_prep_openat_direct(struct io_uring_sqe *sqe, int dfd, const char *path,

int flags, mode_t mode, unsigned file_index);

// IORING_OP_OPENAT2 (5.6, direct support since 5.15)

void io_uring_prep_openat2(struct io_uring_sqe *sqe, int dfd, const char *path,

struct open_how *how);

void io_uring_prep_openat2_direct(struct io_uring_sqe *sqe, int dfd, const char *path,

struct open_how *how, unsigned file_index);

// IORING_OP_ACCEPT (5.5)

void io_uring_prep_accept(struct io_uring_sqe *sqe, int sockfd, struct sockaddr *addr,

socklen_t *addrlen, int flags);

void io_uring_prep_accept_direct(struct io_uring_sqe *sqe, int sockfd, struct sockaddr *addr,

socklen_t *addrlen, int flags, unsigned int file_index);

void io_uring_prep_multishot_accept(struct io_uring_sqe *sqe, int sockfd, struct sockaddr *addr,

socklen_t *addrlen, int flags);

void io_uring_prep_multishot_accept_direct(struct io_uring_sqe *sqe, int sockfd, struct sockaddr *addr,

socklen_t *addrlen, int flags);

// IORING_OP_CLOSE (5.6, direct support since 5.15)

void io_uring_prep_close(struct io_uring_sqe *sqe, int fd);

void io_uring_prep_close_direct(struct io_uring_sqe *sqe, unsigned file_index);

// IORING_OP_SOCKET (5.19)

void io_uring_prep_socket(struct io_uring_sqe *sqe, int domain, int type,

int protocol, unsigned int flags);

void io_uring_prep_socket_direct(struct io_uring_sqe *sqe, int domain, int type,

int protocol, unsigned int file_index, unsigned int flags);

void io_uring_prep_socket_direct_alloc(struct io_uring_sqe *sqe, int domain, int type,

int protocol, unsigned int flags);

Reading and writing file descriptors

The multishot receives (io_uring_prep_recv_multishot(3) and io_uring_prep_recvmsg_multishot(3), introduced in Linux 6.0) require that provided buffers are used via IOSQE_BUFFER_SELECT, and preclude use of MSG_WAITALL. Meanwhile, use of IOSQE_IO_LINK with io_uring_prep_send(3) or io_uring_prep_sendmsg(3) requires MSG_WAITALL (despite MSG_WAITALL not typically being an argument to send(2)/sendmsg(2)).

// IORING_OP_SEND (5.6)

void io_uring_prep_send(struct io_uring_sqe *sqe, int sockfd, const void *buf, size_t len, int flags);

// IORING_OP_SEND_ZC (6.0)

void io_uring_prep_send_zc(struct io_uring_sqe *sqe, int sockfd, const void *buf, size_t len, int flags, int zc_flags);

// IORING_OP_SENDMSG (5.3)

void io_uring_prep_sendmsg(struct io_uring_sqe *sqe, int fd, const struct msghdr *msg, unsigned flags);

void io_uring_prep_sendmsg_zc(struct io_uring_sqe *sqe, int fd, const struct msghdr *msg, unsigned flags);

// IORING_OP_RECV (5.6)

void io_uring_prep_recv(struct io_uring_sqe *sqe, int sockfd, void *buf, size_t len, int flags);

void io_uring_prep_recv_multishot(struct io_uring_sqe *sqe, int sockfd, void *buf, size_t len, int flags);

// IORING_OP_RECVMSG (5.3)

void io_uring_prep_recvmsg(struct io_uring_sqe *sqe, int fd, struct msghdr *msg, unsigned flags);

void io_uring_prep_recvmsg_multishot(struct io_uring_sqe *sqe, int fd, struct msghdr *msg, unsigned flags);

// IORING_OP_READ (5.6)

void io_uring_prep_read(struct io_uring_sqe *sqe, int fd, void *buf, unsigned nbytes, __u64 offset);

// IORING_OP_READV

void io_uring_prep_readv(struct io_uring_sqe *sqe, int fd, const struct iovec *iovecs, unsigned nr_vecs, __u64 offset);

void io_uring_prep_readv2(struct io_uring_sqe *sqe, int fd, const struct iovec *iovecs,

unsigned nr_vecs, __u64 offset, int flags);

// IORING_OP_READ_FIXED

void io_uring_prep_read_fixed(struct io_uring_sqe *sqe, int fd, void *buf, unsigned nbytes, __u64 offset, int buf_index);

void io_uring_prep_shutdown(struct io_uring_sqe *sqe, int sockfd, int how);

void io_uring_prep_splice(struct io_uring_sqe *sqe, int fd_in, int64_t off_in, int fd_out,

int64_t off_out, unsigned int nbytes, unsigned int splice_flags);

// IORING_OP_SYNC_FILE_RANGE (5.2)

void io_uring_prep_sync_file_range(struct io_uring_sqe *sqe, int fd, unsigned len, __u64 offset, int flags);

// IORING_OP_TEE (5.8)

void io_uring_prep_tee(struct io_uring_sqe *sqe, int fd_in, int fd_out, unsigned int nbytes, unsigned int splice_flags);

// IORING_OP_WRITE (5.6)

void io_uring_prep_write(struct io_uring_sqe *sqe, int fd, const void *buf, unsigned nbytes, __u64 offset);

// IORING_OP_WRITEV

void io_uring_prep_writev(struct io_uring_sqe *sqe, int fd, const struct iovec *iovecs,

unsigned nr_vecs, __u64 offset);

void io_uring_prep_writev2(struct io_uring_sqe *sqe, int fd, const struct iovec *iovecs,

unsigned nr_vecs, __u64 offset, int flags);

// IORING_OP_WRITE_FIXED

void io_uring_prep_write_fixed(struct io_uring_sqe *sqe, int fd, const void *buf,

unsigned nbytes, __u64 offset, int buf_index);

// IORING_OP_CONNECT (5.5)

void io_uring_prep_connect(struct io_uring_sqe *sqe, int sockfd, const struct sockaddr *addr, socklen_t addrlen);

Operations on file descriptors

// IORING_OP_URING_CMD

void io_uring_prep_cmd_sock(struct io_uring_sqe *sqe, int cmd_op, int fd, int level,

int optname, void *optval, int optlen);

// IORING_OP_GETXATTR

void io_uring_prep_getxattr(struct io_uring_sqe *sqe, const char *name, char *value,

const char *path, unsigned int len);

// IORING_OP_SETXATTR

void io_uring_prep_setxattr(struct io_uring_sqe *sqe, const char *name, const char *value,

const char *path, int flags, unsigned int len);

// IORING_OP_FGETXATTR

void io_uring_prep_fgetxattr(struct io_uring_sqe *sqe, int fd, const char *name,

char *value, unsigned int len);

// IORING_OP_FSETXATTR

void io_uring_prep_fsetxattr(struct io_uring_sqe *sqe, int fd, const char *name,

const char *value, int flags, unsigned int len);

Manipulating directories

// IORING_OP_FSYNC

void io_uring_prep_fsync(struct io_uring_sqe *sqe, int fd, unsigned flags);

void io_uring_prep_linkat(struct io_uring_sqe *sqe, int olddirfd, const char *oldpath,

int newdirfd, const char *newpath, int flags);

void io_uring_prep_link(struct io_uring_sqe *sqe, const char *oldpath, const char *newpath, int flags);

void io_uring_prep_mkdirat(struct io_uring_sqe *sqe, int dirfd, const char *path, mode_t mode);

void io_uring_prep_mkdir(struct io_uring_sqe *sqe, const char *path, mode_t mode);

void io_uring_prep_rename(struct io_uring_sqe *sqe, const char *oldpath, const char *newpath, unsigned int flags);

void io_uring_prep_renameat(struct io_uring_sqe *sqe, int olddirfd, const char *oldpath,

int newdirfd, const char *newpath, unsigned int flags);

void io_uring_prep_statx(struct io_uring_sqe *sqe, int dirfd, const char *path, int flags,

unsigned mask, struct statx *statxbuf);

void io_uring_prep_symlink(struct io_uring_sqe *sqe, const char *target, const char *linkpath);

void io_uring_prep_symlinkat(struct io_uring_sqe *sqe, const char *target, int newdirfd, const char *linkpath);

void io_uring_prep_unlinkat(struct io_uring_sqe *sqe, int dirfd, const char *path, int flags);

void io_uring_prep_unlink(struct io_uring_sqe *sqe, const char *path, int flags);

Timeouts and polling

// IORING_OP_POLL_ADD

void io_uring_prep_poll_add(struct io_uring_sqe *sqe, int fd, unsigned poll_mask);

void io_uring_prep_poll_multishot(struct io_uring_sqe *sqe, int fd, unsigned poll_mask);

// IORING_OP_POLL_REMOVE

void io_uring_prep_poll_remove(struct io_uring_sqe *sqe, __u64 user_data);

void io_uring_prep_poll_update(struct io_uring_sqe *sqe, __u64 old_user_data, __u64 new_user_data, unsigned poll_mask, unsigned flags);

// IORING_OP_TIMEOUT

void io_uring_prep_timeout(struct io_uring_sqe *sqe, struct __kernel_timespec *ts, unsigned count, unsigned flags);

void io_uring_prep_timeout_update(struct io_uring_sqe *sqe, struct __kernel_timespec *ts, __u64 user_data, unsigned flags);

// IORING_OP_TIMEOUT_REMOVE

void io_uring_prep_timeout_remove(struct io_uring_sqe *sqe, __u64 user_data, unsigned flags);

Transring communication

All transring messages are prepared using the target uring's file descriptor, and thus aren't particularly compatible with the higher-level liburing API (of course, you can just yank ring_fd out of struct io_uring). That's unfortunate, because this functionality is very useful in a multithreaded environment. Note that the ring to which the SQE is submitted can itself be the target of the message!

// IORING_OP_MSG_RING

void io_uring_prep_msg_ring(struct io_uring_sqe *sqe, int fd, unsigned int len, __u64 data, unsigned int flags);

void io_uring_prep_msg_ring_cqe_flags(struct io_uring_sqe *sqe, int fd, unsigned int len, __u64 data, unsigned int flags, unsigned int cqe_flags);

void io_uring_prep_msg_ring_fd(struct io_uring_sqe *sqe, int fd, int source_fd, int target_fd, __u64 data, unsigned int flags);

void io_uring_prep_msg_ring_fd_alloc(struct io_uring_sqe *sqe, int fd, int source_fd, __u64 data, unsigned int flags);

Futexes

// IORING_OP_FUTEX_WAKE

void io_uring_prep_futex_wake(struct io_uring_sqe *sqe, uint32_t *futex, uint64_t val, uint64_t mask, uint32_t futex_flags, unsigned int flags);

// IORING_OP_FUTEX_WAIT

void io_uring_prep_futex_wait(struct io_uring_sqe *sqe, uint32_t *futex, uint64_t val, uint64_t mask, uint32_t futex_flags, unsigned int flags);

// IORING_OP_FUTEX_WAITV

void io_uring_prep_futex_waitv(struct io_uring_sqe *sqe, struct futex_waitv *futex, uint32_t nr_futex, unsigned int flags);

Cancellation

void io_uring_prep_cancel64(struct io_uring_sqe *sqe, __u64 user_data, int flags);

void io_uring_prep_cancel(struct io_uring_sqe *sqe, void *user_data, int flags);

void io_uring_prep_cancel_fd(struct io_uring_sqe *sqe, int fd, int flags);

Linked operations

IOSQE_IO_LINK (since 5.3) or IOSQE_IO_HARDLINK (since 5.5) can be supplied in the flags field of an SQE to link it with the next SQE. The chain can be arbitrarily long (though it cannot cross submission boundaries), terminating in the first linked SQE without this flag set. Multiple chains can execute in parallel on the kernel side. Unless HARDLINK is used, any error terminates a chain (short reads/writes are considered errors); any remaining linked SQEs will be immediately cancelled with return code -ECANCELED.
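The cancellation rule is easier to see in a toy model than in prose. The sketch below is not kernel or liburing code: struct toy_req and toy_run_chain() are hypothetical names, and the only real identifiers are the two IOSQE flags, whose values I've copied from <linux/io_uring.h> (guarded so this compiles without the header). It assumes that a failure on a soft-linked request cancels everything after it in the chain.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <stdint.h>

/* Values mirror <linux/io_uring.h>; guarded so this compiles without it. */
#ifndef IOSQE_IO_LINK
#define IOSQE_IO_LINK     (1U << 2)
#endif
#ifndef IOSQE_IO_HARDLINK
#define IOSQE_IO_HARDLINK (1U << 3)
#endif

/* Hypothetical stand-in for one linked request; not a kernel structure. */
struct toy_req {
  uint8_t flags;    /* link flag tying this request to the next one, or 0 */
  int32_t want;     /* bytes requested (0 for ops with no length) */
  int32_t outcome;  /* what the op would return if it were allowed to run */
  int32_t res;      /* filled in: the value its CQE would carry */
};

/* Walk the chain starting at reqs[0]; returns how many requests it spans. */
static size_t toy_run_chain(struct toy_req *reqs, size_t n){
  int severed = 0;
  size_t i = 0;
  assert(reqs != NULL || n == 0);
  while(i < n){
    struct toy_req *r = &reqs[i++];
    if(severed){
      r->res = -ECANCELED;  /* never ran */
    }else{
      r->res = r->outcome;
      /* a negative result or a short transfer counts as failure */
      int failed = r->outcome < 0 || r->outcome < r->want;
      if(failed && !(r->flags & IOSQE_IO_HARDLINK)){
        severed = 1;        /* soft link: cancel everything after this */
      }
    }
    if(!(r->flags & (IOSQE_IO_LINK | IOSQE_IO_HARDLINK))){
      break;                /* first request without a link flag ends the chain */
    }
  }
  return i;
}
```

Feeding it a short write soft-linked to an fsync and a close cancels the latter two; switch the first link to IOSQE_IO_HARDLINK and all three run.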

Sending it to the kernel

If IORING_SETUP_SQPOLL was provided when creating the uring, a thread was spawned to poll the submission queue. If the thread is awake, there is no need to make a system call; the kernel will ingest the SQE as soon as it is written (io_uring_submit(3) still must be used, but no system call will be made). This thread goes to sleep after sq_thread_idle milliseconds idle, in which case IORING_SQ_NEED_WAKEUP will be written to the flags field of the submission ring.

int io_uring_submit(struct io_uring *ring);

int io_uring_submit_and_wait(struct io_uring *ring, unsigned wait_nr);

int io_uring_submit_and_wait_timeout(struct io_uring *ring, struct io_uring_cqe **cqe_ptr, unsigned wait_nr, struct __kernel_timespec *ts, sigset_t *sigmask);

int io_uring_submit_and_get_events(struct io_uring *ring);

io_uring_submit() and io_uring_submit_and_wait() are trivial wrappers around the internal __io_uring_submit_and_wait():

int io_uring_submit(struct io_uring *ring){

return __io_uring_submit_and_wait(ring, 0);

}

int io_uring_submit_and_wait(struct io_uring *ring, unsigned wait_nr){

return __io_uring_submit_and_wait(ring, wait_nr);

}

which is in turn, along with io_uring_submit_and_get_events(), a trivial wrapper around the internal __io_uring_submit() (calling __io_uring_flush_sq() along the way):

static int __io_uring_submit_and_wait(struct io_uring *ring, unsigned wait_nr){

return __io_uring_submit(ring, __io_uring_flush_sq(ring), wait_nr, false);

}

int io_uring_submit_and_get_events(struct io_uring *ring){

return __io_uring_submit(ring, __io_uring_flush_sq(ring), 0, true);

}

__io_uring_flush_sq() updates the ring tail with a release-semantics write, and __io_uring_submit() calls io_uring_enter() if necessary. When is it necessary? Beyond the basic case of new SQEs with no awake SQPOLL thread to consume them, a call is made if wait_nr is non-zero, if getevents is true, if the ring was created with IORING_SETUP_IOPOLL, or if either of the IORING_SQ_CQ_OVERFLOW or IORING_SQ_TASKRUN SQ ring flags is set. The last three conditions are encapsulated by the internal function cq_ring_needs_enter(). Note that timeouts are implemented internally using an SQE, and thus requesting one will kick off work if the submission ring is full, in order to acquire the entry.
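Here is that decision written out as a standalone predicate. It paraphrases my reading of liburing and is not the library's code: the toy_* functions are hypothetical, and the flag values are copied from <linux/io_uring.h> (guarded so this compiles without the header). The submission-side half assumes that a ring without SQPOLL always needs a call to get new SQEs consumed, and that an SQPOLL ring needs one only when the poller has flagged IORING_SQ_NEED_WAKEUP.

```c
#include <assert.h>
#include <stdbool.h>

/* Values mirror <linux/io_uring.h>; guarded so this compiles without it. */
#ifndef IORING_SETUP_IOPOLL
#define IORING_SETUP_IOPOLL   (1U << 0)
#endif
#ifndef IORING_SETUP_SQPOLL
#define IORING_SETUP_SQPOLL   (1U << 1)
#endif
#ifndef IORING_SQ_NEED_WAKEUP
#define IORING_SQ_NEED_WAKEUP (1U << 0)
#endif
#ifndef IORING_SQ_CQ_OVERFLOW
#define IORING_SQ_CQ_OVERFLOW (1U << 1)
#endif
#ifndef IORING_SQ_TASKRUN
#define IORING_SQ_TASKRUN     (1U << 2)
#endif

/* Submission side: must we enter the kernel to get new SQEs consumed? */
static bool toy_sq_needs_enter(unsigned setup_flags, unsigned sq_flags,
                               unsigned submitted){
  if(submitted == 0){
    return false;                 /* nothing new on the ring */
  }
  if(!(setup_flags & IORING_SETUP_SQPOLL)){
    return true;                  /* nobody else will pick the SQEs up */
  }
  return (sq_flags & IORING_SQ_NEED_WAKEUP) != 0; /* poller is asleep */
}

/* Completion side: the three conditions grouped together in the text. */
static bool toy_cq_needs_enter(unsigned setup_flags, unsigned sq_flags){
  return (setup_flags & IORING_SETUP_IOPOLL) ||
         (sq_flags & (IORING_SQ_CQ_OVERFLOW | IORING_SQ_TASKRUN));
}

/* Would a submit call end up making a system call? */
static bool toy_submit_needs_enter(unsigned setup_flags, unsigned sq_flags,
                                   unsigned submitted, unsigned wait_nr,
                                   bool getevents){
  assert(!(sq_flags & ~(IORING_SQ_NEED_WAKEUP | IORING_SQ_CQ_OVERFLOW |
                        IORING_SQ_TASKRUN)));  /* only flags modelled here */
  return toy_sq_needs_enter(setup_flags, sq_flags, submitted) ||
         getevents || wait_nr != 0 ||
         toy_cq_needs_enter(setup_flags, sq_flags);
}
```

The interesting row is the SQPOLL ring with an awake poller, pending SQEs, and no wait: the predicate is false, which is the whole point of SQPOLL.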

When an action requires a struct (examples would include IORING_OP_SENDMSG and its msghdr, and IORING_OP_TIMEOUT and its timespec64) as a parameter, that struct must remain valid until the SQE is submitted (though not until it completes), i.e. functions like io_uring_prep_sendmsg(3) do not perform deep copies of their arguments.

Submission queue polling details

Using IORING_SETUP_SQPOLL will, by default, create two threads in your process, one named iou-sqp-TID, and the other named iou-wrk-TID. The former is created whenever the uring is enabled (i.e. at creation time, unless IORING_SETUP_R_DISABLED is used). The latter is created the first time work is submitted. Submission queue poll threads can be shared between urings via IORING_SETUP_ATTACH_WQ together with the wq_fd field of io_uring_params.

These threads will be started with the same cgroup, CPU affinities, etc. as the calling thread, so watch out if you've already bound your thread to some CPU! You will now be competing with the poller threads for those CPUs' cycles, and if you're all on a single core, things will not go well.

Submission queue flags

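| Flag | Description |

|---|---|

| IORING_SQ_NEED_WAKEUP | The SQPOLL thread has gone to sleep, and must be woken before it will consume new SQEs. |

| IORING_SQ_CQ_OVERFLOW | The completion queue overflowed. See below for details on CQ overflows. |

| IORING_SQ_TASKRUN | Task work is pending, and the task should enter the kernel to run it. |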

Reaping completions

Submitted actions result in completion events:

struct io_uring_cqe {

__u64 user_data; /* sqe->data submission passed back */

__s32 res; /* result code for this event */

__u32 flags;

/*

* If the ring is initialized with IORING_SETUP_CQE32, then this field

* contains 16-bytes of padding, doubling the size of the CQE.

*/

__u64 big_cqe[];

};

Recall that rather than using errno, errors are returned as their negative value in res.

| CQE flag | Description |

|---|---|

| IORING_CQE_F_BUFFER | If set, upper 16 bits of flags is the buffer ID |

| IORING_CQE_F_MORE | The associated multishot SQE will generate more entries |

| IORING_CQE_F_SOCK_NONEMPTY | There's more data to receive after this read |

| IORING_CQE_F_NOTIF | Notification CQE for zero-copy sends |

Completions can be detected by at least five different means:

- Checking the completion queue speculatively. This either means a periodic check, which will suffer latency up to the period, or a busy check, which will churn CPU, but is probably the lowest-latency portable solution. This is best accomplished with io_uring_peek_cqe(3), perhaps in conjunction with io_uring_cq_ready(3) (neither involves a system call).

int io_uring_peek_cqe(struct io_uring *ring, struct io_uring_cqe **cqe_ptr);

unsigned io_uring_cq_ready(const struct io_uring *ring);

- Waiting on the ring via kernel sleep. Use io_uring_wait_cqe(3) (unbounded sleep), io_uring_wait_cqe_timeout(3) (bounded sleep), or io_uring_wait_cqes(3) (bounded sleep with atomic signal blocking and batch receive). These do not require a system call if they can be immediately satisfied.

int io_uring_wait_cqe(struct io_uring *ring, struct io_uring_cqe **cqe_ptr);

int io_uring_wait_cqe_nr(struct io_uring *ring, struct io_uring_cqe **cqe_ptr, unsigned wait_nr);

int io_uring_wait_cqe_timeout(struct io_uring *ring, struct io_uring_cqe **cqe_ptr, struct __kernel_timespec *ts);

int io_uring_wait_cqes(struct io_uring *ring, struct io_uring_cqe **cqe_ptr, unsigned wait_nr, struct __kernel_timespec *ts, sigset_t *sigmask);

- Using an eventfd together with io_uring_register_eventfd(3). See below for the full API. This eventfd can be combined with e.g. regular epoll.

- Polling the uring's file descriptor directly.

- Using processor-dependent memory watch instructions. On x86, there's MONITOR+MWAIT, but they require you to be in ring 0, so you'd probably want UMONITOR+UMWAIT. This ought allow a very low-latency wake that consumes very little power.

It's sometimes necessary to explicitly flush overflow tasks (or simply outstanding tasks in the presence of IORING_SETUP_DEFER_TASKRUN). This can be kicked off with io_uring_get_events(3):

int io_uring_get_events(struct io_uring *ring);

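Once the CQE can be returned to the system, do so with io_uring_cqe_seen(3), or release several at once with io_uring_cq_advance(3). Both simply advance the CQ head (the former by one entry), so CQEs are always released in ring order, regardless of which CQE is passed to io_uring_cqe_seen(3).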

void io_uring_cqe_seen(struct io_uring *ring, struct io_uring_cqe *cqe);

void io_uring_cq_advance(struct io_uring *ring, unsigned nr);
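To make the head/tail bookkeeping concrete, here is a toy single-producer, single-consumer completion ring. It is a model rather than liburing's implementation: struct toy_cq and the toy_* functions are hypothetical, toy_cq_post() plays the kernel's role, and the eight-entry size is arbitrary. I'm assuming the usual scheme of free-running 32-bit indices masked by the (power of two) ring size.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdint.h>

#define TOY_CQ_ENTRIES 8u  /* must be a power of two */

/* Hypothetical toy CQ: only user_data is stored per entry. */
struct toy_cq {
  _Atomic uint32_t head;   /* advanced by the consumer (userspace) */
  _Atomic uint32_t tail;   /* advanced by the producer (the kernel) */
  uint64_t user_data[TOY_CQ_ENTRIES];
};

/* Number of entries posted but not yet released. */
static unsigned toy_cq_ready(struct toy_cq *cq){
  return atomic_load_explicit(&cq->tail, memory_order_acquire) -
         atomic_load_explicit(&cq->head, memory_order_relaxed);
}

/* Look at the entry `offset` slots past the head without consuming it. */
static uint64_t toy_cq_peek(struct toy_cq *cq, unsigned offset){
  uint32_t head = atomic_load_explicit(&cq->head, memory_order_relaxed);
  assert(offset < toy_cq_ready(cq));
  return cq->user_data[(head + offset) & (TOY_CQ_ENTRIES - 1)];
}

/* Release nr entries at once: a single release store to the head. */
static void toy_cq_advance(struct toy_cq *cq, unsigned nr){
  uint32_t head = atomic_load_explicit(&cq->head, memory_order_relaxed);
  assert(nr <= toy_cq_ready(cq));
  atomic_store_explicit(&cq->head, head + nr, memory_order_release);
}

/* Release exactly one entry: always the oldest. */
static void toy_cqe_seen(struct toy_cq *cq){
  toy_cq_advance(cq, 1);
}

/* Producer side, played by the kernel in real life. */
static void toy_cq_post(struct toy_cq *cq, uint64_t user_data){
  uint32_t tail = atomic_load_explicit(&cq->tail, memory_order_relaxed);
  assert(toy_cq_ready(cq) < TOY_CQ_ENTRIES);  /* a full ring would overflow */
  cq->user_data[tail & (TOY_CQ_ENTRIES - 1)] = user_data;
  atomic_store_explicit(&cq->tail, tail + 1, memory_order_release);
}
```

Because the indices are free-running unsigned values, the subtraction in toy_cq_ready() stays correct across 32-bit wraparound. Batching with toy_cq_advance() costs one store no matter how many entries were handled.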

Multishot

It is possible for a single submission to result in multiple completions (e.g. io_uring_prep_multishot_accept(3)); this is known as multishot. Errors on a multishot SQE will typically terminate the work request; a multishot SQE will set IORING_CQE_F_MORE high in generated CQEs so long as it remains active. A CQE without this flag indicates that the multishot is no longer operational, and must be reposted if further events are desired. Overflow of the completion queue will usually result in a drop of any firing multishot.
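The re-arm decision for something like a multishot accept might be tracked as follows. This is a sketch with hypothetical names (struct toy_ms_accept, toy_ms_accept_cqe()); only IORING_CQE_F_MORE is real, with its value copied from <linux/io_uring.h> and guarded so the block compiles without the header. The caller would respond to TOY_MS_REARM by preparing and submitting a fresh multishot SQE.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Value mirrors <linux/io_uring.h>; guarded so this compiles without it. */
#ifndef IORING_CQE_F_MORE
#define IORING_CQE_F_MORE (1U << 1)
#endif

enum toy_ms_action {
  TOY_MS_KEEP,   /* the multishot SQE is still armed; nothing to do */
  TOY_MS_REARM,  /* it has retired; repost if more events are wanted */
};

/* Hypothetical bookkeeping for one multishot accept. */
struct toy_ms_accept {
  unsigned accepted;  /* CQEs that carried a new descriptor in res */
  unsigned errors;    /* CQEs that carried a negated errno in res */
  bool armed;         /* did the most recent CQE have F_MORE set? */
};

/* Feed one CQE's res and flags in; learn whether to repost. */
static enum toy_ms_action toy_ms_accept_cqe(struct toy_ms_accept *st,
                                            int32_t res, uint32_t flags){
  assert(st != NULL);
  if(res >= 0){
    st->accepted++;  /* res is the accepted socket's descriptor */
  }else{
    st->errors++;
  }
  /* F_MORE, not res, says whether further CQEs will follow */
  st->armed = (flags & IORING_CQE_F_MORE) != 0;
  return st->armed ? TOY_MS_KEEP : TOY_MS_REARM;
}
```

Keying off the flag rather than the sign of res is deliberate: the text above says errors typically end the request, not always.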

Zerocopy

Since kernel 6.0, IORING_OP_SEND_ZC may be driven via io_uring_prep_send_zc(3) or io_uring_prep_send_zc_fixed(3) (the latter requires registered buffers). This will attempt to eliminate an intermediate copy, at the expense of requiring supplied data to remain unchanged longer. Elimination of the copy is not guaranteed. Protocols which do not support zerocopy will generally immediately fail with a res field of -EOPNOTSUPP. A successful zerocopy operation will result in two CQEs:

- a first CQE with standard res semantics and the IORING_CQE_F_MORE flag set, and

- a second CQE with res set to 0 and the IORING_CQE_F_NOTIF flag set.

Both CQEs will have the same user data field.
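The practical question is when the send buffer may be touched again. Below is a small tracker for that, with hypothetical names (struct toy_zc_send, toy_zc_on_cqe()) and flag values copied from <linux/io_uring.h>, guarded so it compiles without the header. It assumes, per my reading of the io_uring_prep_send_zc(3) man page, that a notification follows if and only if the first CQE carried IORING_CQE_F_MORE, so a send that fails outright produces a single CQE. It deliberately doesn't assume the two CQEs are seen in any particular order.

```c
#include <assert.h>
#include <errno.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Values mirror <linux/io_uring.h>; guarded so this compiles without it. */
#ifndef IORING_CQE_F_MORE
#define IORING_CQE_F_MORE  (1U << 1)
#endif
#ifndef IORING_CQE_F_NOTIF
#define IORING_CQE_F_NOTIF (1U << 3)
#endif

/* Hypothetical per-send state; zero-initialize before submitting. */
struct toy_zc_send {
  bool got_result;    /* the CQE with ordinary res semantics has arrived */
  bool expect_notif;  /* that CQE had F_MORE set, so a notification is due */
  bool got_notif;     /* the F_NOTIF CQE has arrived */
  int32_t res;        /* bytes sent or negated errno, once got_result */
};

/*
 * Feed in each CQE whose user_data matches this send. Returns true once
 * the buffer is no longer in use and may be modified or freed.
 */
static bool toy_zc_on_cqe(struct toy_zc_send *s, int32_t res, uint32_t flags){
  assert(s != NULL);
  if(flags & IORING_CQE_F_NOTIF){
    s->got_notif = true;  /* res is 0 here and carries no information */
  }else{
    s->got_result = true;
    s->res = res;
    s->expect_notif = (flags & IORING_CQE_F_MORE) != 0;
  }
  return s->got_result && (!s->expect_notif || s->got_notif);
}
```

Since both CQEs share a user data value, that value is the natural key for finding the struct toy_zc_send when a completion comes in.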

Corner cases

Single fd in multiple rings

If logically equivalent SQEs are submitted to different rings, only one operation seems to take place where that is the logical outcome. For instance, if two rings have the same socket added for an accept(2), a successful three-way TCP handshake will generate only one CQE, on one of the two rings. Which ring sees the event might be different from connection to connection.

Multithreaded use

urings (and especially the struct io_uring object of liburing) are not intended for multithreaded use (quoth Axboe, "don't share a ring between threads"), though they can be used in several threaded paradigms. A single thread submitting and some different single thread reaping is definitely supported. Descriptors can be sent among rings with IORING_OP_MSG_RING. Multiple submitters definitely must be serialized in userspace.

If an op will be completed via a kernel task, the thread that submitted that SQE must remain alive until the op's completion. It will otherwise error out with -ECANCELED. If you must submit the SQE from a thread which will die, consider creating the uring disabled (see IORING_SETUP_R_DISABLED), and enabling it from the thread which will reap the completion event using IORING_REGISTER_ENABLE_RINGS with io_uring_register(2).

If you can restrict all submissions (and creation/enabling of the uring) to a single thread, use IORING_SETUP_SINGLE_ISSUER to enable kernel optimizations. Otherwise, consider using io_uring_register_ring_fd(3) (or io_uring_register(2) directly) to register the ring descriptor with the ring itself, and thus reduce the overhead of io_uring_enter(2).

When IORING_SETUP_SQPOLL is used, the kernel poller thread is considered to have performed the submission, providing another possible way around this problem.

I am aware of no unannoying way to share some elements between threads in a single uring, while also monitoring distinct urings for each thread. I suppose you could poll on both.

Coexistence with epoll/XDP

If you want to monitor both an epoll and a uring in a single thread without busy waiting, you will run into problems. If you set a zero timeout, you're busy waiting; if you set a non-zero timeout, one is dependent on the other's readiness. There are three solutions:

- Add the epoll fd to your uring with IORING_OP_POLL_ADD (ideally multishot), and wait only for uring readiness. When you get a CQE for this submitted event, check the epoll.

- Poll on the uring's file descriptor directly for completion queue events.

- Register an eventfd with your uring with io_uring_register_eventfd(3), add that to your epoll, and when you get POLLIN for this fd, check the completion ring.

The full API here is:

int io_uring_register_eventfd(struct io_uring *ring, int fd);

int io_uring_register_eventfd_async(struct io_uring *ring, int fd);

int io_uring_unregister_eventfd(struct io_uring *ring);

io_uring_register_eventfd_async(3) only posts to the eventfd for events that completed out-of-line. There is not necessarily a bijection between completion events and posts even with the regular form; multiple CQEs can post only a single event, and spurious posts can occur.
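The epoll half of the eventfd approach, as a sketch that needs no ring to run. toy_watch_eventfd() and toy_wait_posts() are hypothetical helpers; in real use the eventfd they create would be handed to io_uring_register_eventfd(3), and a positive return from toy_wait_posts() would be the cue to drain the completion ring until it is empty. Reading the eventfd yields the sum of the posts since the last read, which is one reason there is no one-to-one mapping between wakeups and CQEs.

```c
#include <assert.h>
#include <stdint.h>
#include <sys/epoll.h>
#include <sys/eventfd.h>
#include <unistd.h>

/*
 * Create an eventfd and an epoll set watching it. Returns the epoll
 * descriptor and stores the eventfd in *efd, or returns -1 on failure.
 */
static int toy_watch_eventfd(int *efd){
  assert(efd != NULL);
  *efd = eventfd(0, EFD_NONBLOCK | EFD_CLOEXEC);
  if(*efd < 0){
    return -1;
  }
  int epfd = epoll_create1(EPOLL_CLOEXEC);
  if(epfd < 0){
    close(*efd);
    return -1;
  }
  struct epoll_event ev = { .events = EPOLLIN, .data = { .fd = *efd } };
  if(epoll_ctl(epfd, EPOLL_CTL_ADD, *efd, &ev) < 0){
    close(epfd);
    close(*efd);
    return -1;
  }
  return epfd;
}

/*
 * Wait up to timeout_ms for the eventfd to fire. Returns the number of
 * posts folded into this wakeup, 0 on timeout (or if some other fd in
 * the set fired), and -1 on error.
 */
static int64_t toy_wait_posts(int epfd, int efd, int timeout_ms){
  struct epoll_event ev;
  int n = epoll_wait(epfd, &ev, 1, timeout_ms);
  if(n <= 0){
    return n;
  }
  if(ev.data.fd != efd){
    return 0;  /* a real loop would dispatch the other fd here */
  }
  uint64_t count;
  if(read(efd, &count, sizeof(count)) != (ssize_t)sizeof(count)){
    return -1;
  }
  return (int64_t)count;  /* reading also resets the counter to zero */
}
```

The count is only a hint. Given that spurious posts can occur, the completion ring itself remains the source of truth.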

Similarly, XDP's native means of notification is via poll(2); XDP can be unified with uring using any of these methods.

Queue overflows

The io_uring_prep_msg_ring(3) family of functions will generate a local CQE with the result code -EOVERFLOW if it is unable to fill a CQE on the target ring (when the submission and target ring are the same, I assume you get no CQE, but I'm not sure of this).

By default, the completion queue is twice the size of the submission queue, but it's still not difficult to overflow the completion queue. The size of the submission queue does not limit the number of iops in flight, but only the number that can be submitted at one time (the existence of multishot operations renders this moot).

CQ overflow can be detected by checking the submission queue's flags for IORING_SQ_CQ_OVERFLOW. The flag is eventually unset, though I'm not sure whether it's at the time of next submission or what FIXME. The io_uring_cq_has_overflow(3) function returns true if CQ overflow is waiting to be flushed to the queue:

bool io_uring_cq_has_overflow(const struct io_uring *ring);

What's missing

I'd like to see signalfds and pidfds integrated for purposes of linked operations (you can read from them with the existing infrastructure, but you can't create them, and thus can't link their creation to other events).

Why is there no vmsplice(2) action? How about fork(2)/pthread_create(3) (or more generally clone(2))? We could have coroutines with I/O.

It's kind of annoying that chains can't extend over submissions. If I've got a lot of data I need delivered in order, it seems I'm limited to a single chain in-flight at a time, or else I risk out-of-order delivery due to one chain aborting, followed by items from a subsequent chain succeeding. recv_multishot can help with this.

It seems that the obvious next step would be providing small snippets of arbitrary computation to be run in kernelspace, linked with SQEs. Perhaps eBPF would be a good starting place.

It would be awesome if I could indicate in each SQE some other uring to which the CQE ought be delivered, basically combining inter-ring messages and SQE submission. This would be the ne plus ultra IMHO of multithreaded uring (and be very much like my master's thesis, not that I claim any inspiration/precedence whatsoever for uring).

See also

External links

- Efficient IO with io_uring, Axboe's original paper, and io_uring and networking in 2023

- Lord of the io_uring by Shuveb Hussain

- Yarden Shafir's IoRing vs io_uring: A comparison of Windows and Linux implementations and I/O Rings—When One I/O is Not Enough

- why you should use io_uring for network io, Donald Hunter for Red Hat 2023-04-12

- ioringapi at Microsoft

- Jakub Sitnicki of Cloudflare threw "Missing Manuals: io_uring worker pool" into the ring 2022-02-04

- "IORING_SETUP_SQPOLL_PERCPU status?" liburing issue 324

- "IORING_SETUP_IOPOLL, IORING_SETUP_SQPOLL impact on TCP read event latency" liburing issue 345

- and of course, mandatory LWN coverage:

- "The Rapid Growth of io_uring" 2020-01-24

- "Descriptorless files for io_uring" 2021-07-19

- "Zero-copy network transmission with io_uring" 2021-12-30