Pages

Every memory access on a virtual memory system requires address translation. These translations are typically cached in a Translation Lookaside Buffer (TLB). A TLB miss requires an expensive page table walk, which takes several memory accesses of its own. Larger pages mean more address space translated by each TLB entry and each page table entry, and can thus lead to higher performance.
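The effect is easiest to see as "TLB reach": the amount of address space a fully populated TLB can translate without a miss. Here is a rough sketch, assuming a hypothetical 64-entry TLB whose entries can each hold either page size (real TLBs often keep separate, smaller arrays for large pages):

```python
KIB = 1024
MIB = 1024 * KIB


def tlb_reach(entries, page_size):
    """Bytes of address space covered when every TLB entry is in use."""
    return entries * page_size


# Hypothetical TLB geometry, chosen only for illustration.
ENTRIES = 64

for label, size in (("4 KiB", 4 * KIB), ("2 MiB", 2 * MIB)):
    reach = tlb_reach(ENTRIES, size)
    print(f"{label} pages: {reach // KIB} KiB of reach")
```

With these assumed numbers, moving from 4 KiB to 2 MiB pages multiplies the reach by 512, which is simply the ratio of the page sizes.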

Linux does not allow deterministic use of huge pages without special privileges, so as to prevent denial of service. Using madvise(2) with MADV_HUGEPAGE (available since 2.6.38) indicates that the specified memory is suitable for transparent huge pages, but provides no feedback and guarantees nothing. The much newer (6.1) MADV_COLLAPSE performs a synchronous best-effort movement into transparent huge pages, and seems refreshingly general and robust. mmap(2) has accepted MAP_HUGETLB since 2.6.32, but pages must have already been made available by the administrator (the mapping still requires CAP_IPC_LOCK). Pages are made available via the sysfs interface, the kernel command line, or by mounting the hugetlbfs filesystem. This last provides named hugetlb-backed maps. shmget(2) has supported SHM_HUGETLB for shared memory segments since 2.6.
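As a minimal sketch of the MADV_HUGEPAGE hint, the following uses Python's mmap module, which exposes madvise() and the MADV_HUGEPAGE constant on Linux from Python 3.8. The helper name advise_hugepage and the 4 MiB length are illustrative assumptions. A True result only means the kernel accepted the hint; as noted above, it says nothing about whether huge pages actually back the region.

```python
import mmap


def advise_hugepage(length):
    """Map `length` anonymous bytes and hint that THP may back them.

    Returns True if the kernel accepted the hint, False if the hint is
    unavailable or was rejected. Acceptance guarantees nothing.
    """
    advice = getattr(mmap, "MADV_HUGEPAGE", None)
    if advice is None:  # not Linux, or Python older than 3.8
        return False
    region = mmap.mmap(-1, length,
                       flags=mmap.MAP_PRIVATE | mmap.MAP_ANONYMOUS)
    try:
        region.madvise(advice)  # no start/length: covers the whole mapping
        return True
    except OSError:
        # e.g. EINVAL when the kernel lacks transparent huge page support
        return False
    finally:
        region.close()


if __name__ == "__main__":
    print("hint accepted:", advise_hugepage(4 * 1024 * 1024))
```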

Hardware

- PAE, PSE, PSE36, page tables, PTEs, TLB, MMU, PGD -- explain FIXME

UltraSPARC

- UltraSPARC I and II - four page sizes; one instruction TLB and one data TLB, each with 64 fully-associative entries, each entry capable of using any of the four page sizes.

- UltraSPARC III (750MHz) - FIXME (upshot: just use native 8k pages; there are only 7 largepage TLB entries available to userspace)

- UltraSPARC III (900MHz+) - FIXME (upshot: things are fixed, go for it)

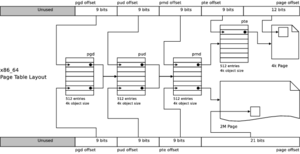

x86/amd64

- 4k default pages / 4M available

- 2M in PAE

- 1G on AMD processors implementing cpuid function 0x8000_0019 (see AMD Document 25481, "CPUID Specification", Revision 2.28)

- Relevant TLB descriptors are in EAX and EBX following CPUID.80000019. Unknown: are they per-core?

- Robert Collins's "Understanding Page Size Extensions on the Pentium Processor" and "Paging Extensions for the Pentium Pro Processor" on x86.org
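On Linux, the x86 features behind these page sizes can be read from the flags line of /proc/cpuinfo. The sketch below assumes Linux's flag names (pse, pae and pdpe1gb), which do not come from the list above, and maps them to the sizes in that list. The helper name large_page_sizes is hypothetical. Note that the 4M size only applies outside PAE and long mode, so this reports what the hardware advertises, not what a running kernel can use.

```python
def large_page_sizes(cpuinfo_text):
    """Return the large page sizes implied by an x86 /proc/cpuinfo dump."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # Line looks like "flags\t\t: fpu vme de pse ..."
            flags.update(line.split(":", 1)[1].split())
            break  # every logical CPU repeats the line; the first suffices
    sizes = []
    if "pse" in flags:
        sizes.append("4M")  # Page Size Extensions (non-PAE paging)
    if "pae" in flags:
        sizes.append("2M")  # PAE-style paging, also used in long mode
    if "pdpe1gb" in flags:
        sizes.append("1G")  # 1G pages
    return sizes


# Abbreviated, made-up sample; a real flags line is much longer.
SAMPLE = "processor\t: 0\nflags\t\t: fpu vme pse pae pdpe1gb\n"
print(large_page_sizes(SAMPLE))
```

On a live system the input would be the contents of /proc/cpuinfo.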

ia64

- Virtually-Hashed Linear Page Table (VHPT)

- Itanium 1: 4k, 16k, 64k, 256k, 1M, 4M, 16M, 64M, 256M

- Itanium 2: 4K, 8K, 16K, 64K, 256K, 1M, 4M, 16M, 64M, 256M, 1G, 4G

- "On-The-Fly TLB Generation to Realize Variable Page Size Support on Linux/IA64" and the ia64SuperPages wiki entry at IA64wiki

- "Transparent Large-Page Support for Itanium Linux", the master's thesis of Ian Raymond

PowerPC

- Mega 16G pages!

- Also 4K, 64K, and 16M at last count...

Huge Pages

Making pages larger has three benefits. There are fewer TLB misses for a given TLB size, since the same number of entries covers more memory (and page identifiers are narrower). Large mapping and releasing operations are faster, since fewer page table entries need to be handled. Finally, less memory is devoted to page table entries for a given amount of memory being indexed. The downsides are possible wastage of main memory (due to pages not being used as completely), and a larger minimum unit to write out when disk-backed pages are dirtied. A 2002 paper from Navarro et al. at Rice, "Transparent Operating System Support for Superpages", proposed transparent operating system support. Applications must generally be modified or wrapped to take advantage of large pages, for instance on Linux (through at least 2.6.30) and Solaris (through at least Solaris 9); FreeBSD (as of 7.2) claims transparent support with high performance.
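The tradeoff can be put in rough numbers. This sketch counts only leaf page table entries (it ignores the upper levels of the table) and treats wastage as the rounding of one allocation up to a whole number of pages. The sizes are illustrative assumptions.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def pages_needed(nbytes, page_size):
    """Pages (and so leaf page table entries) needed to map nbytes."""
    return -(-nbytes // page_size)  # ceiling division


def wasted_bytes(nbytes, page_size):
    """Bytes mapped but unused once nbytes is rounded up to whole pages."""
    return pages_needed(nbytes, page_size) * page_size - nbytes


for label, size in (("4 KiB", 4 * KIB), ("2 MiB", 2 * MIB)):
    entries = pages_needed(1 * GIB, size)
    waste = wasted_bytes(3 * MIB, size)
    print(f"{label}: {entries} entries per GiB, "
          f"{waste // KIB} KiB wasted on a 3 MiB allocation")
```

Mapping 1 GiB takes 262144 entries with 4 KiB pages but only 512 with 2 MiB pages. Conversely, a 3 MiB allocation fits 4 KiB pages exactly but leaves 1 MiB unused when rounded up to two 2 MiB pages.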

Linux

- Huge pages were a 2003 Kernel Summit topic, having first been introduced in Linux 2.5.36 (LinuxGazette primer article)

- Rohit Seth provided the first explicit large page support to applications as covered in this LWN article

- alloc_hugepages(2), free_hugepages(2), get_large_pages(2) and shared_large_pages(2) were present in kernels 2.5.36-2.5.54

- hugetlbfs and assorted infrastructure replaced these. Mel Gorman's Linux MM wiki has a good page on hugetlbfs. With the CONFIG_HUGETLBFS kernel option enabled, the following variables are seen in /proc/meminfo (from 2.6.30 on amd64 with no hugepages reserved):

    HugePages_Total:     0
    HugePages_Free:      0
    HugePages_Rsvd:      0
    HugePages_Surp:      0
    Hugepagesize:     2048 kB

- The hugepages= kernel parameter or /proc/sys/vm/nr_hugepages can be used to preallocate/release huge pages. From the same machine, with nr_hugepages=512:

    HugePages_Total:  1024
    HugePages_Free:   1016
    HugePages_Rsvd:      1
    HugePages_Surp:      0
    Hugepagesize:     2048 kB

- Val Henson wrote a good 2006 KHB article in LWN on transparent largepage support

- Jonathan Corbet followed up with a relevant summary of the 2007 Kernel Summit's VM mini-summit

- There appears, as of Linux 2.6.30 and glibc 2.9, to exist no way to use shm_open(3) with huge pages under Linux

- One can, of course, directly open(2) and mmap(2) a file on a hugetlbfs filesystem

- If sysfs is mounted, each supported large pagesize will have a directory in /sys/kernel/mm/hugepages/:

    [wopr](0) $ ls /sys/kernel/mm/hugepages/hugepages-2048kB/
    free_hugepages  nr_overcommit_hugepages  surplus_hugepages
    nr_hugepages    resv_hugepages
    [wopr](0) $

- Expansion via ftruncate(2) has been supported since Ken Chen's 2007-08-01 patch (or was it Zhang Yanmin's on 2006-03-08? -- either way, 2.6.16-era)

- libhugetlbfs uses LD_PRELOAD to back some calls (just malloc(3)?) with hugetlbfs accesses

- Patchsets by Eric B. Munson at IBM and Andrea Arcangeli are aiming at largely userspace-transparent hugetlb usage
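The /proc/meminfo fields and sysfs directory names quoted above are easy to read programmatically. This is a small sketch, with hypothetical helper names, that parses text and does not touch the live system. The sample mirrors the first meminfo excerpt.

```python
import re


def hugepage_meminfo(text):
    """Extract HugePages_* counters and Hugepagesize (kB) from meminfo text."""
    fields = {}
    for line in text.splitlines():
        m = re.match(r"(HugePages_\w+|Hugepagesize):\s+(\d+)", line)
        if m:
            fields[m.group(1)] = int(m.group(2))
    return fields


def sysfs_page_size_kb(dirname):
    """Return the size in kB encoded in a name like 'hugepages-2048kB'."""
    m = re.fullmatch(r"hugepages-(\d+)kB", dirname)
    return int(m.group(1)) if m else None


SAMPLE = """\
HugePages_Total:     0
HugePages_Free:      0
HugePages_Rsvd:      0
HugePages_Surp:      0
Hugepagesize:     2048 kB
"""

info = hugepage_meminfo(SAMPLE)
free_kb = info["HugePages_Free"] * info["Hugepagesize"]
print(f"{free_kb} kB free in huge pages of {info['Hugepagesize']} kB")
print(sysfs_page_size_kb("hugepages-2048kB"))
```

On a live system the inputs would be the contents of /proc/meminfo and the entries of /sys/kernel/mm/hugepages/.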

Solaris

- Essential paper: "Supporting Multiple Page Sizes in the Solaris Operating System" (March 2004)

- Solaris 2.6 through Solaris 8 offered "intimate shared memory" (ISM) based on 4M pages, requested via shmat(2) with the SHM_SHARE_MMU flag

- Solaris 9 supported a variety of page sizes and introduced memcntl(2) to configure page sizes on a per-map basis

- The ppgsz(1) wrapper and libmpss.so library allow configuration of heap/stack pagesizes on a per-app-instance basis

- The getpagesizes(2) system call has been added to discover multiple page sizes

- The Solaris Internals Wiki Multiple Page Size Support entry is excellent

FreeBSD

- FreeBSD 7.2, released May 2009, supports fully transparent "superpages"

- They must be enabled via setting loader tunable vm.pmap.pg_ps_enabled to 1

- See the thread entitled "Superpages?" on the freebsd-current mailing list

Applications

- MySQL can use hugetlbfs via the large-pages option

- kvm can use hugetlbfs with the --mem-path option since kvm-62, released in late 2008

- The Sun JVM makes transparent use of large pages since version 5.0

Page Clustering

Page clustering was implemented for Linux by William Lee Irwin in 2003 (it is not to be confused with page-granularity swap-out clustering). There's good coverage in this KernelTrap article. It is essentially huge pages without hardware support, and therefore carries some overhead and offers no improvement in TLB-relative performance. It was written up in Irwin's 2003 OLS paper, "A 2.5 Page Clustering Implementation".

See Also

- Weisberg and Wiseman 2009, "Using 4KB Pages for Virtual Memory is Obsolete"

- "RFC: Transparent Hugepage support" Andrea Arcangeli on LKML, 2009-10-26